|

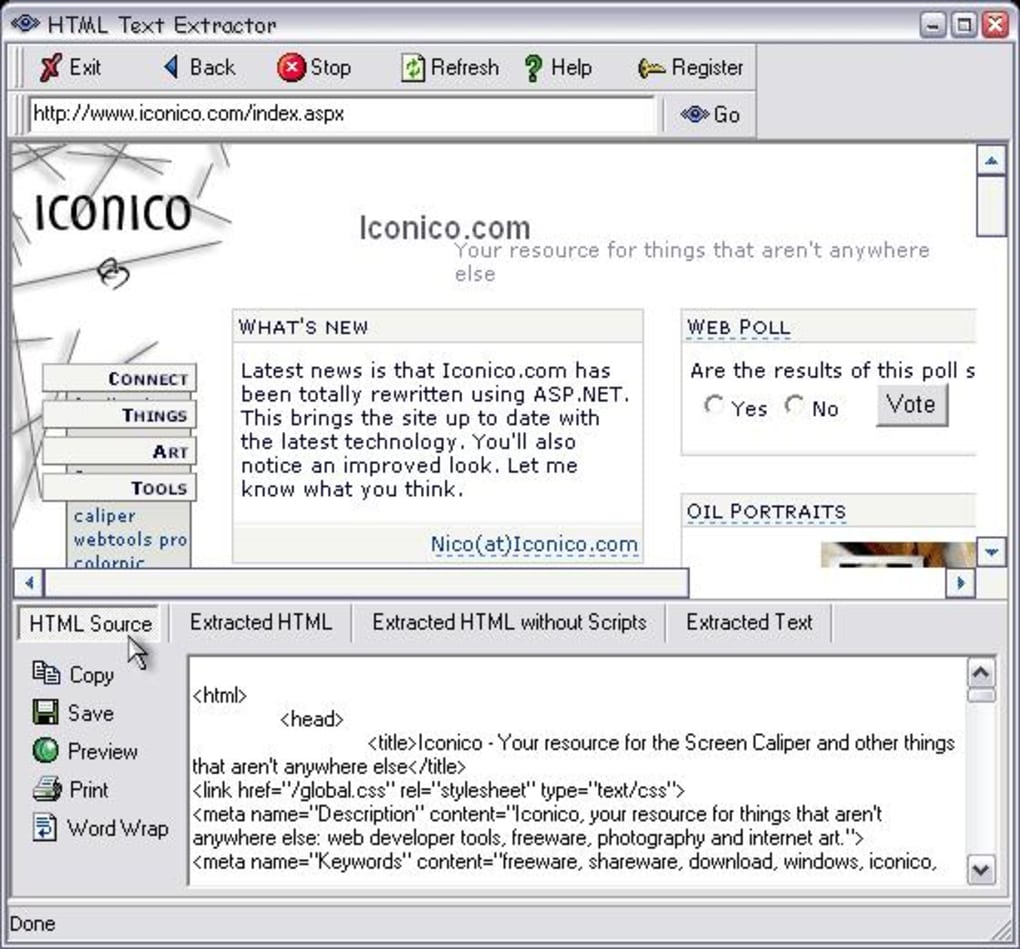

This page describes Google's interpretation of the Robots Exclusion Protocol (REP). If you're new to robots.txt, start with Google's frequently asked questions and their answers.

If you don't want crawlers to access sections of your site, you can create a robots.txt file with appropriate rules. A robots.txt file is a simple text file containing rules about which crawlers may access which parts of a site.

The page's example robots.txt file is commented as controlling the crawling of URLs under one site: all crawlers are disallowed from crawling files in the "includes" directory, such as .js files, but Google needs them for rendering, so Googlebot is allowed to crawl them.

You must place the robots.txt file in the top-level directory of a site, on a supported protocol. The URL for the robots.txt file is (like other URLs) case-sensitive. For Google Search, the supported protocols are HTTP, HTTPS, and FTP. On HTTP and HTTPS, crawlers fetch the robots.txt file with an HTTP non-conditional GET request; on FTP, crawlers use a standard RETR (RETRIEVE) command, using anonymous login.

The rules listed in the robots.txt file apply only to the host, protocol, and port number where the robots.txt file is hosted.
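The "includes" rules and the host, protocol, and port scoping described above can be tried out with a small sketch. It assumes Python's standard `urllib.robotparser`; the `example.com` host, the file contents, the `OtherBot` crawler name, and the `robots_txt_url` and `load_rules` helpers are hypothetical and not taken from the source. Python's parser applies rules in file order, which is not necessarily how Google resolves conflicting rules, so treat the result as an approximation.

```python
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, modelled on the example described above.
ROBOTS_TXT = """\
# All crawlers are disallowed from the "includes" directory,
# but Googlebot may crawl it because it needs the files for rendering.
User-agent: *
Disallow: /includes/

User-agent: Googlebot
Allow: /includes/
"""


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL whose rules cover page_url.

    Scheme, host, and port are kept unchanged, because the rules apply
    only to that exact combination.
    """
    parts = urlsplit(page_url)
    return f"{parts.scheme}://{parts.netloc}/robots.txt"


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)
asset = "https://example.com/includes/app.js"  # hypothetical URL
googlebot_allowed = rules.can_fetch("Googlebot", asset)
otherbot_allowed = rules.can_fetch("OtherBot", asset)
```

Here `googlebot_allowed` reflects the Googlebot group, while `otherbot_allowed` falls back to the `*` group. A page on another port or protocol would be governed by a different file, which is what `robots_txt_url` makes explicit.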

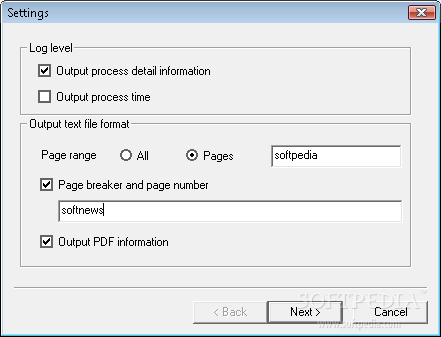

How Google interprets the robots.txt specification

Google's automated crawlers support the Robots Exclusion Protocol (REP). This means that before crawling a site, Google's crawlers download and parse the site's robots.txt file to extract information about which parts of the site may be crawled. The REP isn't applicable to Google's crawlers that are controlled by users (for example, feed subscriptions), or crawlers that are used to increase user safety (for example, malware analysis).

To extract URLs from a sitemap with the XML Sitemap URL Extractor:

1. Open "" and click on the "Request temporary access to the demo server" button to enable the CORS Anywhere proxy for your session.
2. Click the "Load Sitemap" button to fetch the XML sitemap content. The content will be displayed in the input textarea.
3. If you prefer to paste the XML sitemap content directly, you can do so in the input textarea labeled "Or paste your XML sitemap here".
4. Click the "Extract URLs" button to process the XML sitemap contents and extract the URLs. The extracted URLs will be displayed in the output textarea.
5. Once the extracted URLs are displayed, click the "Export to CSV" button to download a CSV file containing them.

Note: If you encounter a "NetworkError" or "Forbidden" error while loading the sitemap, it may be due to the browser's same-origin policy. CORS Anywhere is a popular proxy designed to bypass that policy, so make sure you have enabled it to avoid such issues.

With these simple steps, the XML Sitemap URL Extractor makes it easy to obtain a list of URLs from any XML sitemap for further analysis or use in your projects.

"The XML Sitemap URL Extractor makes URL extraction from sitemaps a breeze. Brilliant for competitor research."
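The extract-and-export steps above can also be reproduced offline. The following is a minimal sketch using only Python's standard library, not the tool's own implementation; the sample sitemap, the `extract_urls` and `urls_to_csv` helper names, and the single `url` column header are assumptions made for illustration.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Namespace used by sitemaps that follow the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"

# Hypothetical sitemap content, standing in for what you would paste or load.
SAMPLE_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/about</loc></url>
</urlset>
"""


def extract_urls(sitemap_xml: str) -> list[str]:
    """Return the text of every <loc> element, in document order."""
    root = ET.fromstring(sitemap_xml.strip())
    return [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]


def urls_to_csv(urls: list[str]) -> str:
    """Serialize the URLs as one-column CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["url"])  # assumed header; the tool's actual format is unknown
    writer.writerows([u] for u in urls)
    return buffer.getvalue()


urls = extract_urls(SAMPLE_SITEMAP)
csv_text = urls_to_csv(urls)
```

Because this parses text you already have, no proxy is needed; the same-origin restriction only affects fetching a sitemap from inside a browser page.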

|
